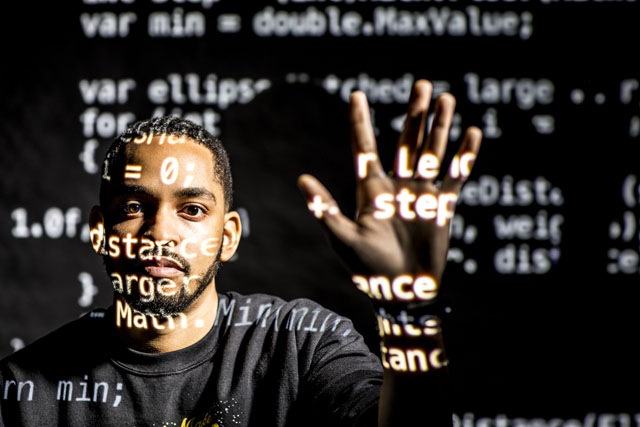

“I’m pretty sure if you went around and asked, ‘Hey, what do you think a touch is?’ you might get different answers, but it would probably converge around something that looks like this,” says Martez Mott, raising his pointer finger before tapping it on the table in front of him. “For the participants in my studies, that was not the case at all.”

Mott is a Ph.D. candidate in the UW’s Information School and an active member of the philanthropy-supported MAD (Mobile + Accessible Design) Lab and the DUB (Design. Use. Build.) Group, where his research centers on making touch screens accessible for people who live with motor impairments, from cerebral palsy to muscular dystrophy to Parkinson’s disease.

“Touch screens were developed for a certain type of use,” says Mott, who received financial support through the University of Washington Graduate Opportunity Award. It’s simple: If you want to interact with something on your device, you have to be able to extend a finger and touch it cleanly and accurately. “That’s it,” he says. “Everything else is relegated to some other type of functionality — if you touch your phone with two fingers, it thinks you’re trying to zoom.”

For those who live with cerebral palsy, however, touch might look like patting the screen with the back of the hand, or dragging a palm or a fist. “When that happens, the screen picks up a big blobby area,” says the Detroit native, “and the system has no idea what to do with it.”

Yet.

Imagine a system that adapts to touch, whatever that might look like. Enter Smart Touch: software that would allow people with motor impairments to pick up a device and, after a simple calibration procedure, use it without additional specialized technology.

Think of it as the counterpart to automated speech recognition, where the device asks you to repeat a few phrases so it can pick up on your unique speech patterns. In Mott’s model, the device asks you to touch a crosshair so it can pick up on your unique touch patterns and adjust how it responds.

The project is a work in progress, constantly tested and tuned by Mott and a small team of researchers that includes his advisor, Associate Professor Jacob Wobbrock. It is guided throughout by Mott’s participants, who give him real-life, real-time feedback.

Two years ago, at the start of the project, Mott lugged a table-sized touch-screen device into Provail, a local center that helps people with disabilities pursue the lives they want to live.

There, Mott worked to make Smart Touch a reality, beginning by asking volunteers to hit targets on the screen. One of them was Ken Frye, a DJ with cerebral palsy who’s been in the radio industry for over 40 years. Today, Frye hosts his own radio show using older technology, but he says being able to use a touch-screen device would make his job much easier and faster.

The stories of the participants varied, but each one was equally important to Mott’s research. “I had a wide range of participants, so I saw a wide range of strategies used to complete the tasks,” he says, stressing that this is why inclusive innovation — actually looping the software’s target population into the design process — is so important. “It was difficult to get an understanding of what ‘touch’ looks like.”

Mott says people used the software in ways he couldn’t have predicted, which resulted in a lot of trial and error. But failure is part of the learning process, and as he creates newer versions of Smart Touch over the next few years, the iterations will only get better.

“The hope is that you could buy a device, turn on an accessibility setting, and then go through a calibration procedure for better touch performance so you can use your phone or tablet however you like,” says Mott, who’s slated to wrap up his dissertation by 2019.

Bringing Smart Touch to market is still a few years away, but Mott has a vision, starting with buy-in from the tech industry. “I would love to see every touch-enabled device, public or private, be accessible, with this technology integrated into every operating system, quietly running in the background. I want to see a check-out kiosk at the grocery store embedded with intelligence that can infer people’s behaviors based on their unique touch,” he says. “We can do it.”